Recently, I have been fascinated by the concept of generative music systems. So this edition will cover a few of those ideas, as well as how they interact with other pieces of data.

But before that, I have some work to announce! Although not generative in its creation, I just released an EP of music under the new musical moniker Saint Silva. This project will be a space to explore further sound experiments, alongside some of the generative themes discussed later in this newsletter. For this first release, I focused more on a traditional writing process by using analog instruments and field recordings. You can listen here, and read about how and why I produced the music in this blog post.

Inputs -> Outputs

At a very, very basic level, most of my work can be described as a series of inputs and outputs. I use data as an input, and a visual representation (chart, map, table, etc.) becomes the output. When it comes to working with sound, the field of data sonification seems to be the immediate parallel to data visualization: instead of a visualization, audio becomes the output.
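As a rough sketch of that input-to-output idea, here is one way a list of numbers could be mapped onto pitches. The temperature values are made up for illustration, and `map_to_midi` is a hypothetical helper of my own naming, not part of any library. It only produces MIDI note numbers; turning those into sound would need a MIDI library or a synth on top.

```python
# Hypothetical data: monthly average temperatures in degrees C (made up for illustration).
temperatures = [2.0, 4.5, 9.0, 13.5, 18.0, 21.5, 23.0, 22.0, 18.5, 12.0, 6.5, 3.0]


def map_to_midi(values, low_note=48, high_note=84):
    """Linearly rescale values onto a MIDI note range (48 = C3, 84 = C6)."""
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:
        # All values are equal, so place every note in the middle of the range.
        return [(low_note + high_note) // 2 for _ in values]
    return [
        round(low_note + (v - lo) / span * (high_note - low_note))
        for v in values
    ]


# The lowest data point becomes the lowest note, the highest becomes the highest.
notes = map_to_midi(temperatures)
print(notes)
```

The design choice here is the simplest one available: a straight linear scale from data range to pitch range, the audio equivalent of a line chart's y-axis.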

Data sonification has been used to great effect, especially with the ubiquity of podcasts these days. NASA has even used sonification to explore the galaxies.

But generative music systems are not necessarily about that. Rather than encoding specific, measurable quantities from the data into the audio output, generative music instead provides a set of rules for handling any given input. That input is often random in nature, which data sonification tends to stay away from.

For more on data sonification, I must mention here my friend Duncan’s brilliant podcast Loud Numbers, which he has crafted alongside co-host Miriam Quick. Go check it out if you’re interested in seeing how data sonification is done. The Data Sonification Archive is also quite a good treasure chest.

But on to generative music systems. What fascinates me about this approach to music is its emphasis on process over outcome. The “rules”, which translate to scales, tempo, notes, timbre, and other musical qualities, are the creative pursuit. Whatever happens after that is secondary. In this sense, there is no data input other than a set of rules to follow.
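To make the "rules" idea a bit more concrete, here is a minimal sketch of a rule set in Python. Everything in it is an assumption chosen for illustration: the scale, the tempo, the `MAX_STEP` limit, and the `generate_melody` function are hypothetical, and a random walk is just one of many possible rules. Like most generative systems, the only input is randomness.

```python
import random

# The "rules": a scale, a tempo, and how far the melody may move per step.
C_MINOR_PENTATONIC = [60, 63, 65, 67, 70]  # MIDI notes C4, Eb4, F4, G4, Bb4
TEMPO_BPM = 72
MAX_STEP = 2  # move at most two scale degrees between consecutive notes


def generate_melody(length, seed=None):
    """Random walk over the scale; returns (midi_note, duration_seconds) pairs."""
    rng = random.Random(seed)
    beat = 60 / TEMPO_BPM  # length of one beat in seconds
    index = rng.randrange(len(C_MINOR_PENTATONIC))
    melody = []
    for _ in range(length):
        # Each note lasts half a beat, one beat, or two beats.
        duration = rng.choice([0.5, 1, 2]) * beat
        melody.append((C_MINOR_PENTATONIC[index], duration))
        step = rng.randint(-MAX_STEP, MAX_STEP)
        # Clamp the index so the walk never leaves the scale.
        index = max(0, min(len(C_MINOR_PENTATONIC) - 1, index + step))
    return melody


# A fixed seed reproduces the same melody; leave it out for a new one each run.
for note, duration in generate_melody(8, seed=1):
    print(note, round(duration, 2))
```

Changing the scale, the step limit, or the duration choices changes the character of everything the system produces, which is why the rules, rather than any single melody, are where the composing happens.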

This approach to me appears to be one of deep curiosity, of constantly asking questions of the world and our systems. It uses bits of data, bytes of code, and logical if/then statements to create a piece of music that, otherwise, may not have existed if left to human hands alone.

The links below all relate to this way of thinking: defining a set of rules to create a system that generates something else (in this case, music). I hope to continue finding other ways of implementing similar oblique strategies in the future.

Read (and explore)

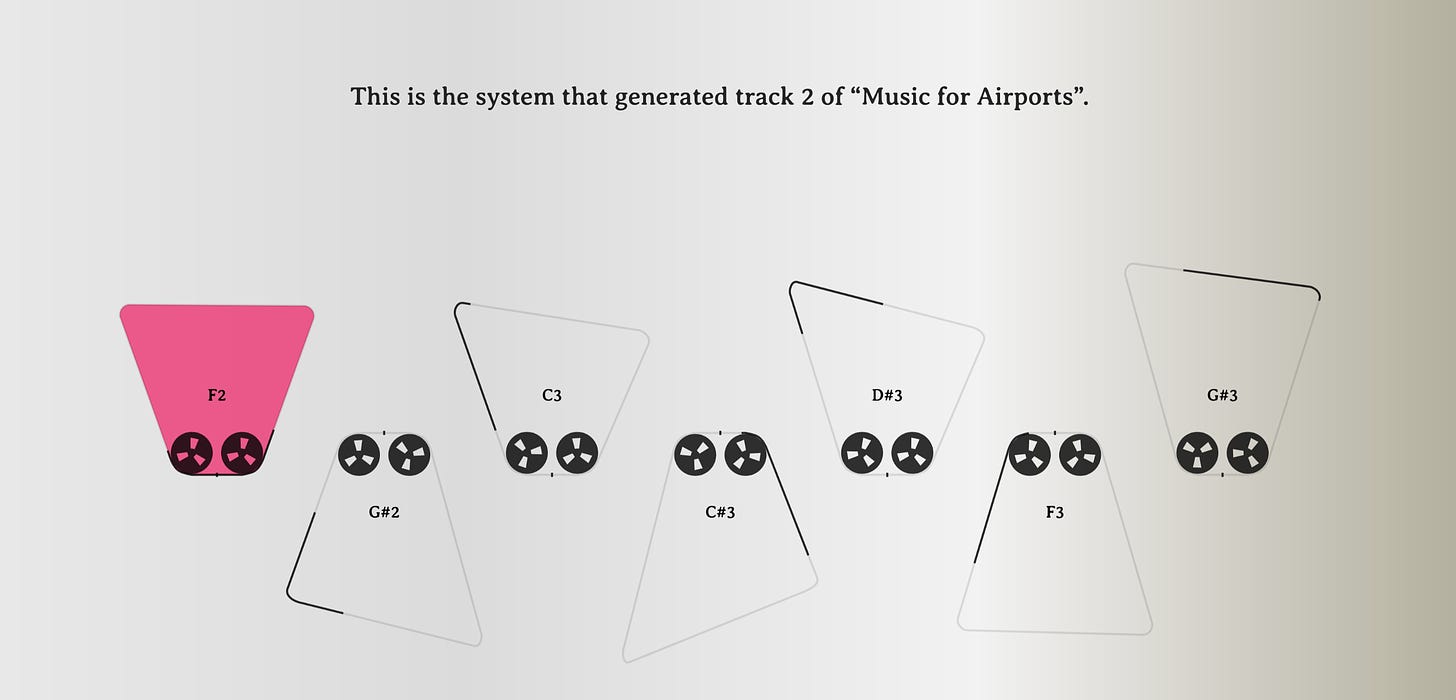

How Generative Music Works

This interactive web explainer created by Tero Parviainen is the best introduction to generative music, and generative systems (slightly different), that I have found. The interactive graphics throughout make for an intuitive way to learn and listen to seminal pieces from Steve Reich and Brian Eno as a way to better understand these concepts.

Explore (and listen)

I know it’s a touchy topic for some, so I’ll save my commentary on NFTs for another time. Regardless of your views, the collaborative NFT series “Rituals” presents an excellent example of the kind of art that generative systems are capable of producing. As explained in this thread, each art piece and accompanying soundtrack is created through programmatically defined variables. And incredibly, it always creates a unique composition:

The musical composition does not repeat, and the artwork is subtly different each time you play it. If left running, we've tested that Rituals would continue generating music and artwork for ~9 million years.

Learn (and code)

♪♪♫♪ ♫♪♪♪♫♪ ♫♪♪♪♫♪ ♫♪ ♫♪ ♫♪

║░█░█░║░█░█░█░║░█░█░║

║░█░█░║░█░█░█░║░█░█░║

║░║░║░║░║░║░║░║░║░║░║

╚═╩═╩═╩═╩═╩═╩═╩═╩═╩═╝

I wanted to mix and match a few options for diving into generative systems with music, so I’m leaving three different tutorials below to get you started:

How to convert your data into MIDI in Python (also with some no-code options) (h/t Loud Numbers)

JavaScript Systems Music: Learning Web Audio by Recreating The Works of Steve Reich and Brian Eno